Some of the most successful and lucrative online scams employ a “low-and-slow” approach — avoiding detection or interference from researchers and law enforcement agencies by stealing small bits of cash from many people over an extended period. Here’s the story of a cybercrime group that compromises up to 100,000 email inboxes per day, and apparently does little else with this access except siphon gift card and customer loyalty program data that can be resold online.

The data in this story come from a trusted source in the security industry who has visibility into a network of hacked machines that fraudsters in just about every corner of the Internet are using to anonymize their malicious Web traffic. For the past three years, the source — we’ll call him “Bill” to preserve his requested anonymity — has been watching one group of threat actors that is mass-testing millions of usernames and passwords against the world’s major email providers each day.

Bill said he’s not sure where the passwords are coming from, but he assumes they are tied to various databases from compromised websites that get posted to password cracking and hacking forums on a regular basis. Bill said this criminal group averages between five and ten million email authentication attempts daily, and comes away with anywhere from 50,000 to 100,000 working inbox credentials.

In about half the cases the credentials are being checked via “IMAP,” which is an email standard used by email software clients like Mozilla’s Thunderbird and Microsoft Outlook. With his visibility into the proxy network, Bill can see whether or not an authentication attempt succeeds based on the network response from the email provider (e.g. mail server responds “OK” = successful access).
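As a rough illustration of the kind of check Bill describes, the sketch below classifies a server's reply to an IMAP LOGIN command. IMAP servers answer a tagged command with a status of OK, NO, or BAD; the function name and the sample tag `a001` are assumptions made for this example and do not come from Bill's data or tooling.

```python
def classify_login_response(tag: str, line: str) -> str:
    """Classify a tagged IMAP reply to a LOGIN command.

    Returns "success" for OK, "rejected" for NO, "error" for BAD,
    and "other" for untagged lines or lines carrying a different tag.
    """
    parts = line.strip().split(" ", 2)
    # A tagged reply looks like: <tag> <status> <free text>
    if len(parts) < 2 or parts[0] != tag:
        return "other"
    status = parts[1].upper()
    return {"OK": "success", "NO": "rejected", "BAD": "error"}.get(status, "other")


if __name__ == "__main__":
    # Hypothetical reply lines, for illustration only.
    print(classify_login_response("a001", "a001 OK LOGIN completed"))
    print(classify_login_response("a001", "a001 NO [AUTHENTICATIONFAILED] Invalid credentials"))
```

An observer on the network path would only need the status word of the tagged reply to tell a working credential from a failed one, which is why this check is so cheap to run at scale.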

You might think that whoever is behind such a sprawling crime machine would use their access to blast out spam, or conduct targeted phishing attacks against each victim’s contacts. But based on interactions that Bill has had with several large email providers so far, this crime gang merely uses custom, automated scripts that periodically log in and search each inbox for digital items of value that can easily be resold.

And they seem particularly focused on stealing gift card data.

“Sometimes they’ll log in as much as two to three times a week for months at a time,” Bill said. “These guys are looking for low-hanging fruit — basically cash in your inbox. Whether it’s related to hotel or airline rewards or just Amazon gift cards, after they successfully log in to the account their scripts start pilfering inboxes looking for things that could be of value.”

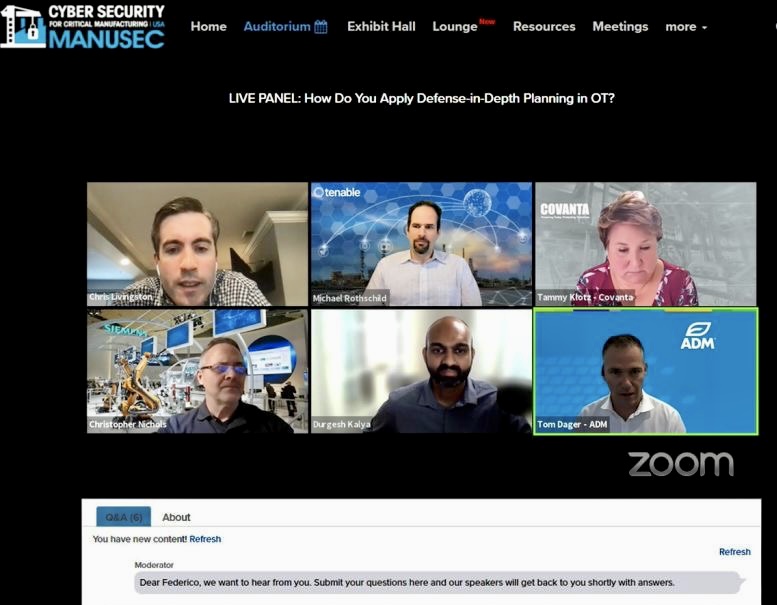

A sample of some of the most frequent search queries made in a single day by the gift card gang against more than 50,000 hacked inboxes.

According to Bill, the fraudsters aren’t downloading all of their victims’ emails: That would quickly add up to a monstrous amount of data. Rather, they’re using automated systems to log in to each inbox and search for a variety of domains and other terms related to companies that maintain loyalty and points programs, and/or issue gift cards and handle their fulfillment.

Why go after hotel or airline rewards? Because these accounts can all be cleaned out and deposited onto a gift card number that can be resold quickly online for 80 percent of its value.

“These guys want that hard digital asset — the cash that is sitting there in your inbox,” Bill said. “You literally just pull cash out of peoples’ inboxes, and then you have all these secondary markets where you can sell this stuff.”

Bill’s data also show that this gang is so aggressively going after gift card data that it will routinely seek new gift card benefits on behalf of victims, when that option is available. For example, many companies now offer employees a “wellness benefit” if they can demonstrate they’re keeping up with some kind of healthy new habit, such as daily gym visits, yoga, or quitting smoking.

Bill said these crooks have figured out a way to tap into those benefits as well.

“A number of health insurance companies have wellness programs to encourage employees to exercise more, where if you sign up and pledge to 30 push-ups a day for the next few months or something you’ll get five wellness points towards a $10 Starbucks gift card, which requires 1000 wellness points,” Bill explained. “They’re actually automating the process of replying saying you completed this activity so they can bump up your point balance and get your gift card.”

The Gift Card Gang’s Footprint

How do the compromised email credentials break down in terms of ISPs and email providers? There are victims on nearly all major email networks, but Bill said several large Internet service providers (ISPs) in Germany and France are heavily represented in the compromised email account data.

“With some of these international email providers we’re seeing something like 25,000 to 50,000 email accounts a day get hacked,” Bill said. “I don’t know why they’re getting popped so heavily.”

That may sound like a lot of hacked inboxes, but Bill said some of the bigger ISPs represented in his data have tens or hundreds of millions of customers.

Measuring which ISPs and email providers have the biggest numbers of compromised customers is not so simple in many cases, nor is identifying companies with employees whose email accounts have been hacked.

This kind of mapping is often more difficult than it used to be because so many organizations have now outsourced their email to cloud services like Gmail and Microsoft Office365 — where users can access their email, files and chat records all in one place.

“It’s a little complicated with Office 365 because it’s one thing to say okay how many Hotmail connections are you seeing per day in all this credential-stuffing activity, and you can see the testing against Hotmail’s site,” Bill said. “But with the IMAP traffic we’re looking at, the usernames being logged into are any of the million or so domains hosted on Office365, many of which will tell you very little about the victim organization itself.”

On top of that, it’s also difficult to know how much activity you’re not seeing.

Looking at the small set of Internet address blocks he knows are associated with Microsoft 365 email infrastructure, Bill examined the IMAP traffic flowing from this group to those blocks. Bill said that in the first week of April 2021, he identified 15,000 compromised Office365 accounts being accessed by this group, spread over 6,500 different organizations that use Office365.

“So I’m seeing this traffic to just like 10 net blocks tied to Microsoft, which means I’m only looking at maybe 25 percent of Microsoft’s infrastructure,” Bill explained. “And with our puny visibility into probably less than one percent of overall password stuffing traffic aimed at Microsoft, we’re seeing 600 Office accounts being breached a day. So if I’m only seeing one percent, that means we’re likely talking about tens of thousands of Office365 accounts compromised daily worldwide.”
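Bill's back-of-the-envelope estimate can be written out as a short calculation. This is only a sketch of the arithmetic in his quote: it assumes the traffic he can see is representative of the traffic he cannot, which his data does not establish, and the function name is made up for this example.

```python
def extrapolate_daily_total(observed_per_day: float, visible_fraction: float) -> float:
    """Scale an observed daily count up to an estimated total.

    visible_fraction is the share of overall traffic the observer
    believes they can see (0.01 means one percent).
    """
    if not 0 < visible_fraction <= 1:
        raise ValueError("visible_fraction must be in the range (0, 1]")
    return observed_per_day / visible_fraction


if __name__ == "__main__":
    # Figures from Bill's quote: 600 accounts a day at roughly 1% visibility.
    print(round(extrapolate_daily_total(600, 0.01)))  # 60000
```

At one percent visibility, 600 observed compromises a day scales to about 60,000, consistent with Bill's "tens of thousands." The result is very sensitive to the visibility guess: at two percent it would drop to 30,000.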

In a December 2020 blog post about how Microsoft is moving away from passwords to more robust authentication approaches, the software giant said an average of one in every 250 corporate accounts is compromised each month. As of last year, Microsoft had nearly 240 million active users, according to this analysis.

“To me, this is an important story because for years people have been like, yeah we know email isn’t very secure, but this generic statement doesn’t have any teeth to it,” Bill said. “I don’t feel like anyone has been able to call attention to the numbers that show why email is so insecure.”

Bill says that in general companies have a great many more tools available for securing and analyzing employee email traffic when that access is funneled through a Web page or VPN, versus when that access happens via IMAP.

“It’s just more difficult to get through the Web interface because on a website you have a plethora of advanced authentication controls at your fingertips, including things like device fingerprinting, scanning for HTTP header anomalies, and so on,” Bill said. “But what are the detection signatures you have available for detecting malicious logins via IMAP?”

Microsoft declined to comment specifically on Bill’s research, but said customers can block the overwhelming majority of account takeover efforts by enabling multi-factor authentication.

“For context, our research indicates that multi-factor authentication prevents more than 99.9% of account compromises,” reads a statement from Microsoft. “Moreover, for enterprise customers, innovations like Security Defaults, which disables basic authentication and requires users to enroll a second factor, have already significantly decreased the proportion of compromised accounts. In addition, for consumer accounts, adding a second authentication factor is required on all accounts.”

A Mess That’s Likely to Stay That Way

Bill said he’s frustrated by having such visibility into this credential testing botnet while being unable to do much about it. He’s shared his data with some of the bigger ISPs in Europe, but says months later he’s still seeing those same inboxes being accessed by the gift card gang.

The problem, Bill says, is that many large ISPs lack any sort of baseline knowledge of or useful data about customers who access their email via IMAP. That is, they lack any sort of instrumentation to be able to tell the difference between legitimate and suspicious logins for their customers who read their messages using an email client.

“My guess is in a lot of cases the IMAP servers by default aren’t logging every search request, so [the ISP] can’t go back and see this happening,” Bill said.
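To make the logging gap concrete, here is a minimal sketch of what recording search requests might involve: pulling the quoted search strings out of a raw IMAP SEARCH command. It assumes the provider can capture the command line as text, handles only quoted strings (not IMAP literals), and uses a hypothetical function name; it is not a description of how any particular mail server logs traffic.

```python
import re

# Matches a tagged SEARCH or UID SEARCH command, e.g. 'a002 SEARCH TEXT "x"'.
_SEARCH_CMD = re.compile(r'^\S+\s+(?:UID\s+)?SEARCH\s+(.*)$', re.IGNORECASE)
# Captures the contents of each double-quoted string argument.
_QUOTED = re.compile(r'"([^"]*)"')


def extract_search_terms(command_line: str) -> list[str]:
    """Return the lowercased quoted strings from an IMAP SEARCH command.

    Returns an empty list for any line that is not a SEARCH command.
    """
    match = _SEARCH_CMD.match(command_line.strip())
    if match is None:
        return []
    return [term.lower() for term in _QUOTED.findall(match.group(1))]


if __name__ == "__main__":
    # Hypothetical command line, for illustration only.
    print(extract_search_terms('a002 SEARCH TEXT "Amazon Gift Card"'))
```

Storing only the extracted terms per account, rather than full message content, would be enough to go back later and see the pattern Bill describes.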

Compounding the challenge, there isn’t much of an upside for ISPs interested in voluntarily monitoring their IMAP traffic for hacked accounts.

“Let’s say you’re an ISP that does have the instrumentation to find this activity and you’ve just identified 10,000 of your customers who are hacked. But you also know they are accessing their email exclusively through an email client. What do you do? You can’t flag their account for a password reset, because there’s no mechanism in the email client to effect a password change.”

Which means those 10,000 customers are then going to start receiving error messages whenever they try to access their email.

“Those customers are likely going to get super pissed off and call up the ISP mad as hell,” Bill said. “And that customer service person is then going to have to spend a bunch of time explaining how to use the webmail service. As a result, very few ISPs are going to do anything about this.”

Indicators of Compromise (IoCs)

It’s not often KrebsOnSecurity has occasion to publish so-called “indicators of compromise” (IoCs), but hopefully some ISPs may find the information here useful. This group automates the searching of inboxes for specific domains and trademarks associated with gift card activity and other accounts with stored electronic value, such as rewards points and mileage programs.

This file includes the top inbox search terms used in a single 24-hour period by the gift card gang. The numbers on the left in the spreadsheet represent the number of times during that 24-hour period that the gift card gang ran a search for that term in a compromised inbox.

Some of the search terms are focused on specific brands — such as Amazon gift cards or Hilton Honors points; others are for major gift card networks like CashStar, which issues cards that are white-labeled by dozens of brands like Target and Nordstrom. Inboxes hacked by this gang will likely be searched on many of these terms over the span of just a few days.
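For an ISP that does log inbox searches, one way to put the search terms to work is to flag accounts that are searched for many distinct listed terms within a short window. The sketch below is one possible heuristic, not a method described by Bill: the event format `(timestamp, account, search_term)`, the function name, and the thresholds of five terms in three days are all assumptions chosen for illustration.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def flag_suspicious_accounts(events, ioc_terms, min_terms=5, window=timedelta(days=3)):
    """Return accounts searched for at least min_terms distinct IoC terms
    within any span of length `window`.

    events: iterable of (timestamp, account, search_term) tuples
            (a hypothetical log format).
    """
    ioc = {term.lower() for term in ioc_terms}
    hits = defaultdict(list)
    for ts, account, term in events:
        if term.lower() in ioc:
            hits[account].append((ts, term.lower()))

    flagged = set()
    for account, records in hits.items():
        records.sort()
        start = 0
        counts = {}  # term -> occurrences inside the current window
        for ts, term in records:
            counts[term] = counts.get(term, 0) + 1
            # Drop records that have fallen out of the sliding window.
            while ts - records[start][0] > window:
                old = records[start][1]
                counts[old] -= 1
                if counts[old] == 0:
                    del counts[old]
                start += 1
            if len(counts) >= min_terms:
                flagged.add(account)
                break
    return flagged


if __name__ == "__main__":
    # Placeholder terms and addresses, for illustration only.
    terms = ["amazon", "cashstar", "hilton", "target", "nordstrom"]
    base = datetime(2021, 4, 1)
    events = [(base + timedelta(hours=i), "victim@example.com", t)
              for i, t in enumerate(terms)]
    events.append((base, "normal@example.com", "amazon"))
    print(flag_suspicious_accounts(events, terms))
```

Requiring several distinct terms rather than a single match is meant to avoid flagging a customer who simply searches their own inbox for one retailer; the right thresholds would depend on each provider's traffic.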